Classroom

The hour approaches…

- Which One Doesn’t Belong?

This is Which One Doesn’t Belong?, a website dedicated to providing thought-provoking puzzles for math teachers and students alike. There are no answers provided as there are many different, correct ways of choosing which one doesn’t belong. Enjoy!

- Solving word problems using tape diagrams – The Other Math

Tape diagrams are especially useful for modeling addition, subtraction, multiplication, division, fractions, and ratios/proportions.

Might work in well with Number Talks, or be a break from them.

- Those Circle Things – Blue Cereal Education

Nice way to start a discussion: four things, which one is different… no right answer.

You probably figured out pretty quickly that there’s usually more than one solution to which one doesn’t fit and why. These aren’t multiple choice questions with one objectively “correct” answer. It’s the process of examining each item in comparison with the others that forces us to review what we already know, reinforces the information we use to argue whatever point we choose to make as a result, stretches our understanding of each item a bit, and lays the groundwork for actual analysis and argument should we eventually go there.

Maps

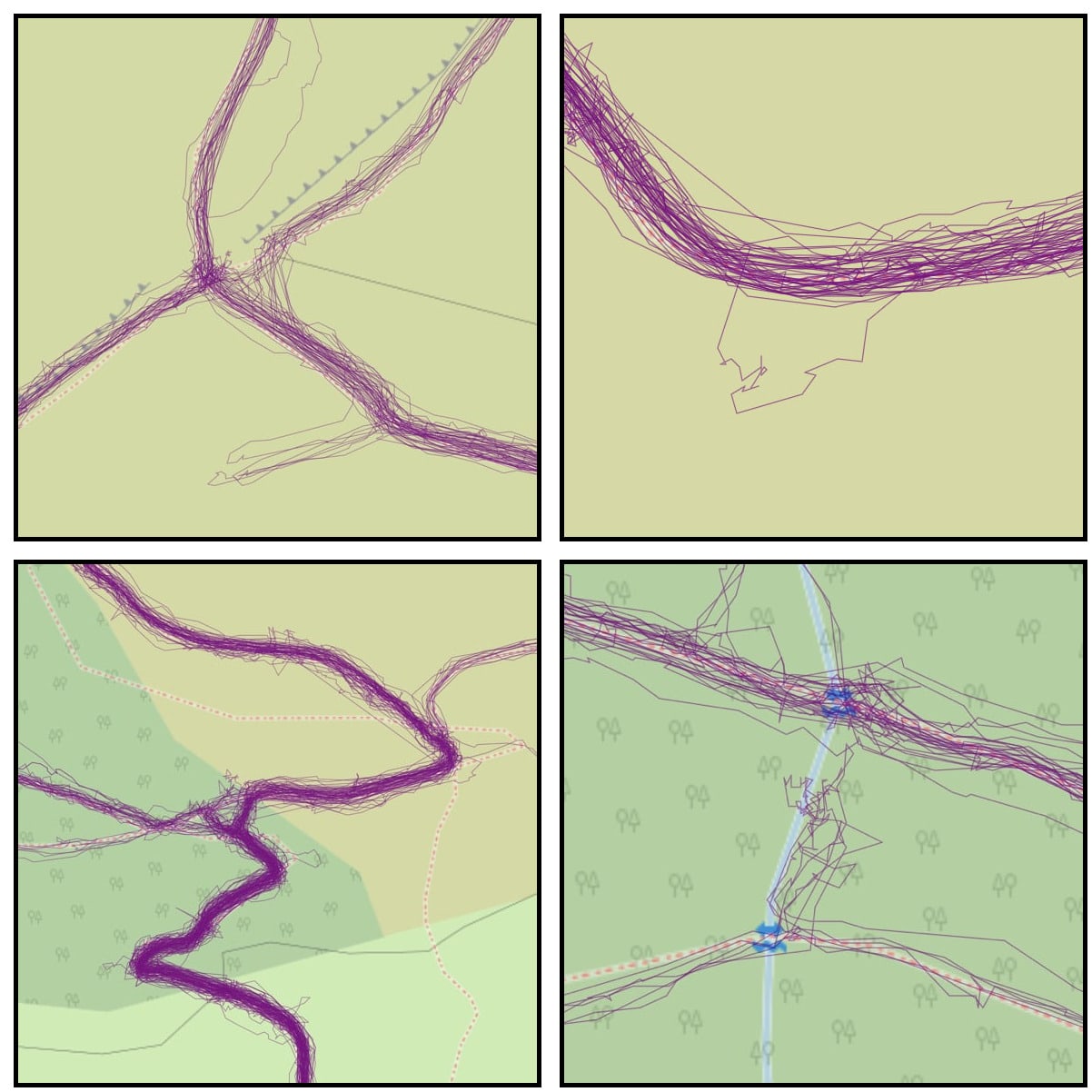

- overpass turbo

“…a web-based data filtering tool for OpenStreetMap”

HT Joe. I really like OpenStreetMap, and this makes me like it even more.

WordPress

- WordPress Playground

Experience a WordPress that runs entirely in your browser.

…WordPress Playground makes WordPress instantly accessible for users, learners, extenders, and contributors.

And, even more insane and amazing, Ella van Durpe used WordPress Playground as the basis for a new note-taking app for iOS and macOS. Blocknotes is a stripped-down version of WordPress that lets you create notes the way you would create posts in WordPress.

Fun

- Town Square

Town Square is a generative sculpture, animated with slow-paced movement. The work is code-based and runs live in the browser. A random seed was chosen by the artist, cementing the generative shape of the sculpture. A looping video file for exhibition purposes can be provided upon request. Made with html, css, javascript, and three.js. Creator: Anna Lucia

I am often impressed by HTML/CSS/JavaScript art ideas; this is a lovely one. I’ve occasionally taken baby steps myself, usually in response to the Daily Create.

- Garry Knight: “Using AirPods as a Hearing Aid…” – Toot.Cat

I might get some AirPods and try this; my hearing is getting a bit worse with age…

- Let Me Google That

I recall an old Flash thing that did this. Now I just need a silly question…